Getting value from users’ data (and time!)

3 November 2015 - Jessica Cameron

Survey frustrations and the value of data

I was doing a bit of online shopping recently, and I saw an invitation on one retailer’s website to participate in a short survey. Of course I’d do that! I’m always happy to give feedback to help make the user experience better.

However, the further I went, the more frustrated I became and the less value I saw in their efforts – or mine.

A recap

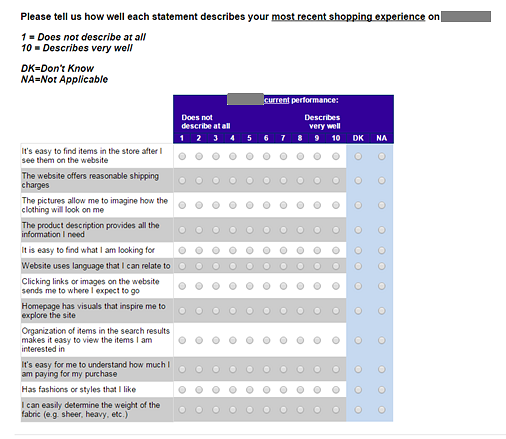

After answering a few preliminary questions, I was confronted with a page that looked like this:

How long is this really going to take me?

That’s a lot of questions. And that’s only one page. There were three pages of statements, with approximately twelve statements on each page, and for each statement I was asked to indicate how well it described my most recent shopping experience on this company’s website. There were a total of twelve answer choices for each statement: a scale from 1 to 10, and additional ‘don’t know’ and ‘not applicable’ options. Are you as lost reading this as I was trying to decipher what I was meant to do?

What I was thinking

Okay, let’s begin. First question: ‘It’s easy to find items in the store after I see them on the website’. Well, I’ve only been on the website, not in a ‘store’, during this ‘most recent shopping experience’. That’s easy: ‘don’t know’. Or is it not applicable, because it’s not a situation I was in? I’ll tick ‘not applicable’.

Moving on to the next question. ‘The website offers reasonable shipping charges’. Well, I saw that delivery is free on orders over £50, and that sounds reasonable. But come to think of it, I never actually saw how much shipping would cost if I didn’t manage to spend £50. I could select ‘don’t know’ – since, well, I don’t know what the shipping charges are. But I think I’ll give it a ‘4’, because I don’t think it’s very reasonable that they don’t tell me what the shipping charges are upfront. Or maybe it’s not that bad, so I can give it a ‘5’? Nah, I’ll go for a ‘4’.

I’m still not sure how they wanted me to interpret that question, though.

Can I just answer ‘don’t know’ to everything?

Moving a bit further down the page, I get to this statement: ‘Organisation of items in the search results makes it easy to view the items I am interested in’. Are they really asking their customers to make decisions about how search results are organised? Are their customers all usability professionals?

I may know a thing or two about search results, but I didn’t even use the search bar, so that’s another ‘not applicable’ for me.

Are these answers really telling them anything?

You know what? I don’t know if I have the energy to fill out this whole survey. I was also going to pop over to their bricks-and-mortar shop during my lunch break, but now I don’t want to think about them any more today at all.

What have they accomplished with this survey?

They’ve irritated a potential customer, and I would also argue that they’re not even likely to obtain many actionable insights as a result.

The questions are difficult to interpret, which means that different respondents’ identical ratings may in fact be tapping into very different aspects of the user experience (remember the many possible readings of the shipping costs question alone).

Offering so many response options for each question also makes it hard to choose among them. Considerable psychometric research has shown that for unipolar questions – the kind where one endpoint is labelled ‘not at all’ and the other ‘very’ – 5 scale points is the ideal number for capturing real differences without overwhelming respondents. Offering 10 scale points introduces noise into the data – and can make it look like there are statistical differences between a 4 and a 5, when really respondents may have chosen randomly between them.

Surveys should not sap your customers’ goodwill

I did stick with the survey, and it took me about ten minutes to fill out (like I said, there were a lot of questions). At that rate, every 1,000 responses this company gets will cost respondents a total of around 10,000 minutes – roughly 167 hours, or nearly a full week of customers’ time spent filling out this survey.
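The arithmetic behind that estimate can be sketched in a few lines of Python. The function name is hypothetical, and the flat ten minutes per response is an assumption taken from my own experience; real completion times will vary from person to person.

```python
def total_survey_time(responses: int, minutes_each: float) -> dict:
    """Estimate the combined time respondents spend on a survey.

    Assumes every respondent takes the same number of minutes,
    which is a simplification for illustration only.
    """
    total_minutes = responses * minutes_each
    total_hours = total_minutes / 60   # 60 minutes per hour
    total_days = total_hours / 24      # 24 hours per day, around the clock
    return {"minutes": total_minutes, "hours": total_hours, "days": total_days}


# 1,000 responses at roughly ten minutes each
cost = total_survey_time(1_000, 10)
print(f"{cost['minutes']:,.0f} minutes = {cost['hours']:.1f} hours = {cost['days']:.2f} days")
# prints: 10,000 minutes = 166.7 hours = 6.94 days
```

Plugging in a shorter survey, say three minutes per response, shows how quickly the total burden on customers falls.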

Customers who fill out surveys on retailers’ websites are doing them an enormous favour. The least our favourite retailers could do is respect our time, by 1) not making us work too hard and 2) designing studies that will lead to measurable improvements in our user experience. Is that too much to ask?

Short, simple, structured surveys

Surveys can tap into the opinions of large numbers of people very quickly, and thus yield meaningful insights, but only if they are done well. Putting together a survey should not be seen as an easy option, because it’s actually very difficult to design a good survey. When we do survey research, we take the time to make sure that it will add value to your organisation – and will not frustrate your customers.

Contact us to find out more about how our question sets result in meaningful and actionable insights.
