Capturing the emotional experience in user research

8 November 2023 - Kirsty Simpson

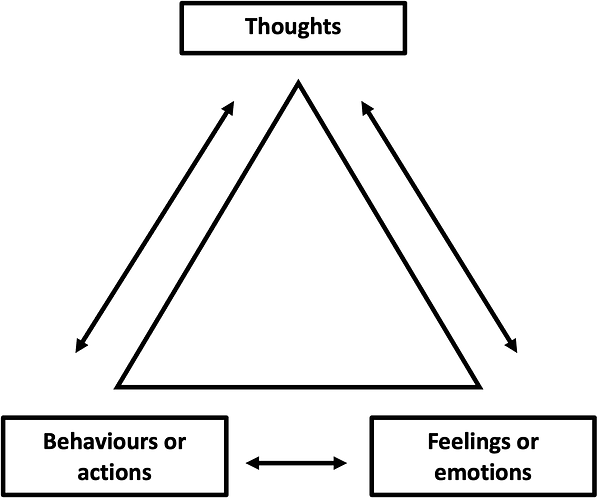

It has long been recognised in psychology, particularly in cognitive behavioural therapy, that how we think about situations affects our emotions and the way we feel. And how we feel drives our behaviour and actions (Skyland Trail, n.d.).

Delving deeper into this concept, it is important to differentiate between emotions and feelings. Emotions are unconscious physical responses triggered by external stimuli, while feelings are the conscious, subjective interpretations of those emotions. Emotions lead to feelings, and both drive behaviour. They are two sides of the same coin.

Understanding the intricate relationship between the two is crucial to making sense of our current approach to emotion in user testing research.

In this article, we will explore why the emotional experience of your product or service can impact a user’s decision to interact with it in the future, how we are currently capturing users’ emotional experience, and the exciting possibilities that arise from collaborating with artificial intelligence (AI) in the future.

Emotionally charged events drive behaviour

Poet and author Maya Angelou once said, “people will forget what you said, people will forget what you did, but people will never forget how you made them feel”.

This poignant quote reflects the fact that emotionally charged events linger in the mind.

If an event frustrates, upsets or disappoints a person, it can evoke negative emotions and feelings that are tied to the formation of a memory. The higher the level of significance of the event, and the higher the level of engagement or investment in the experience, the stronger the memory (isitedesign, 2015).

The formation of memories from emotions is succinctly explained by John Medina (2014), a renowned author and scientist specialising in brain development:

“The amygdala is chock-full of the neurotransmitter dopamine, and it uses dopamine in [the same way we use post-it notes]. When the brain detects an emotionally charged event, the amygdala releases dopamine into the system. Because dopamine greatly aids memory and information processing, you could say the post-it note reads ‘remember this’.”

According to studies of adaptive memory, this human memory system offers us a survival advantage: our brain remembers situations that have posed a threat in the past, which allows us to avoid them in future (Nairne, Pandeirada & Thompson, 2008).

Similarly, when it comes to our own experiences of digital products, if we have a memorable experience - good or bad - the emotions and subsequent feelings associated with that memory will dictate our decision to interact with that product in the future.

According to Nobel laureate and renowned psychologist Daniel Kahneman, the decision to repeat an experience is based on the memory of the experience rather than the actual experience itself (Flacandji and Krey, 2020).

Therefore, the emotional experience surrounding a digital product or service can make or break a user’s decision to interact with it in the future. So, what are UX researchers currently doing to understand it?

How we currently understand the emotional experience of a product or service

A key part of understanding a user’s emotional experience of a digital product or service is journey mapping: understanding the pain points and areas of delight that occur at different stages of the user journey.

We can identify these points through qualitative research methods such as usability testing, where we are able to hear what a user is thinking (through the ‘think-aloud’ protocol) and observe the outcomes of an emotional feeling (through the action the user takes on a digital product).

But we are missing a key component when it comes to understanding users’ emotional experience, and ultimately when it comes to understanding their behaviour.

When a situation arises, we have thoughts about the facts of that situation; those thoughts trigger feelings (driven by emotion), and based on those feelings we engage in certain actions on a digital product:

thoughts (lead to) emotion-driven feelings (lead to) actions

During usability testing we can understand the ‘thoughts’ and the ‘actions’ components in this behavioural equation, but we do not have a complete understanding of how users feel.

And it is important to capture this, because understanding how users feel about a product helps paint a fuller picture of what might be driving user behaviour (Oliver & Kasser, 2015).

Our capability for capturing the full emotional experience

So, using the think-aloud protocol and observing user actions on a digital product can help us gauge how a user feels. But how successful are we, as UX researchers, in fully capturing how users feel about a product or service?

When we undertake qualitative research methods, such as usability testing, we need to have the capacity to do a number of things: to observe what people do, listen to what they say, provide test tasks, ask the right probes at the right times, get a sense of what people are feeling, and to understand the main pain points and areas of delight along the user journey.

With lots of things demanding our attention, we have less capacity to capture other emotional subtleties, such as changes in voice intonation and micro-expressions.

Yet these emotional subtleties can play a large role in understanding how a user feels. According to Mehrabian’s 7-38-55 rule of personal communication, words (verbal communication) account for just 7% of our understanding of others’ moods, feelings and attitudes. Non-verbal messages, communicated through tone of voice (38%) and facial expressions (55%), give us the greatest clues as to how someone feels (World of Work Project, n.d.).

So, how can we capture the full emotional experience?

The truth is, no one can truly understand how others subjectively feel about something, but we can get closer to it by understanding these emotional nuances.

So how can we capture them?

Enter Emotion AI.

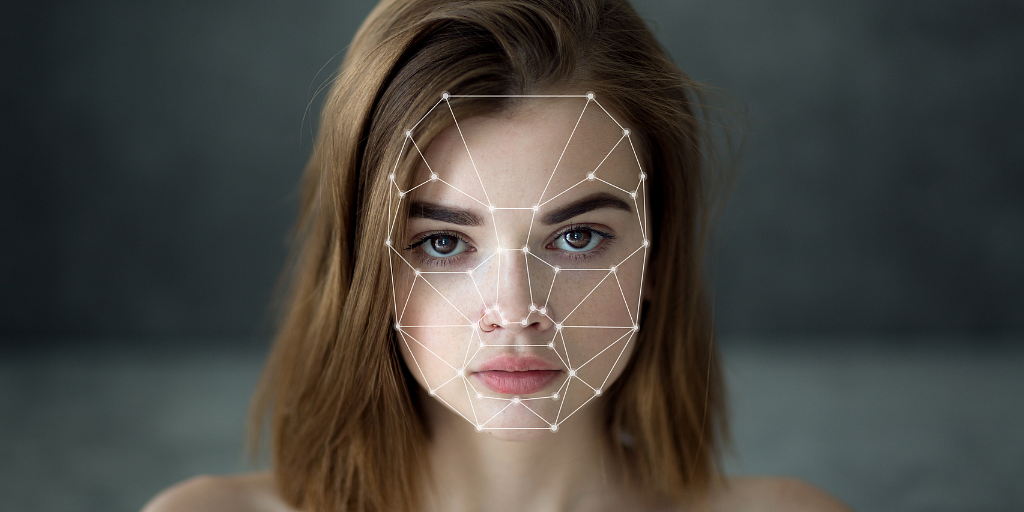

Emotion AI tools use automated video-conferencing technology, artificial intelligence (AI) and machine learning techniques to detect human emotions and emotional nuances.

At present, these tools are being applied to research methods such as usability testing and interviews.

Emotion AI tools (like Odaptos) record the user’s face and voice during usability testing or interviews. They analyse facial expressions, tone of voice, and general sentiment along the digital user journey (or in response to interview questions).

At the conclusion of each research session, any captured Emotion AI data is then fed into a dashboard where consumers can see a full, and granular, breakdown of the participant’s emotional experience.

Potential for collaborating with, and applying, Emotion AI in the future

Considering the application of such tools in the future, there is the possibility that we could supplement qualitative research data (e.g., from usability testing) with quantitative Emotion AI data to better understand how users feel. This would allow companies to move forward with a much richer data set and greater confidence around design decisions.

However, applying Emotion AI in this way would have its limits. Qualitative research requires only small sample sizes to be meaningful, whereas quantitative research requires large sample sizes to produce statistically significant results that companies can act on. The quantitative output of Emotion AI could therefore only be used alongside very large, and so very infrequent, qualitative studies (involving multiple qualitative research methods, e.g., usability testing and interviews).

An alternative avenue for future Emotion AI application would be a purely qualitative one. Instead of looking at the statistical significance of the data, we could look at its outputs (face, voice and sentiment analysis) in an interpretative and observational way, much like we observe users during usability testing and see where they look in eye-tracking. Used in this way, its outputs could be another supplementary data point to bolster findings and to help map the nuances of how users feel onto user journey maps.
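As a rough illustration of this qualitative use, the sketch below groups Emotion AI readings by journey stage so they can sit alongside a researcher's own observations on a journey map. Everything in it is an assumption made for illustration: the record fields (`stage`, `signal`, `label`), the emotion labels and the sample values are hypothetical, and they do not represent the output format of Odaptos or any other tool.

```python
from collections import Counter, defaultdict

# Hypothetical readings: the fields and labels are illustrative assumptions,
# not the export format of any real Emotion AI tool.
readings = [
    {"stage": "search", "signal": "face", "label": "neutral"},
    {"stage": "search", "signal": "voice", "label": "neutral"},
    {"stage": "checkout", "signal": "face", "label": "frustrated"},
    {"stage": "checkout", "signal": "voice", "label": "frustrated"},
    {"stage": "checkout", "signal": "sentiment", "label": "negative"},
    {"stage": "confirmation", "signal": "face", "label": "happy"},
]


def labels_by_stage(records):
    """Count how often each emotion label was observed at each journey stage."""
    counts = defaultdict(Counter)
    for record in records:
        counts[record["stage"]][record["label"]] += 1
    return counts


summary = labels_by_stage(readings)
for stage, labels in summary.items():
    # The counts are prompts for interpretation, not statistical evidence.
    print(stage, dict(labels))
```

A stage where several signals point the same way (here, the made-up "checkout" stage) would be a natural place to revisit the session recording and the participant's think-aloud comments.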

When is it likely that Emotion AI could be useful in UX research?

Emotion AI is already offered by companies such as Odaptos, Kairos, Hoomano and Affectiva. However, its quality and accuracy are fairly primitive at the moment.

While AI has the potential to process and analyse vast amounts of data quickly, it still lacks the inherent human qualities of empathy, intuition, and contextual understanding that contribute to accurate emotion detection (Hunkenschroer & Kriebitz, 2023). Achieving a level of emotional intelligence comparable to humans will likely require significant advancements in AI research. It could be several years or even decades before AI systems reach a level where they consistently outperform humans in detecting emotions in user research (Market Business News, 2021; Odsc, 2020).

Yet projected adoption of this AI-driven technology is very high, and its implementation in user research is likely to be on the horizon. The global emotion detection and recognition market is expected to grow substantially: “it is projected to grow from $23.5 billion in 2022, to $42.9 billion in 2027”, according to revenue impact firm MarketsandMarkets.
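To put the quoted figures in perspective, the short calculation below works out the constant yearly growth rate they imply, which comes to roughly 13% a year. It is a back-of-the-envelope sketch that assumes steady compound growth over the five years; the function name is ours, and the forecast itself may not assume a constant rate.

```python
def compound_annual_growth_rate(start_value, end_value, years):
    """Return the constant yearly growth rate that turns start_value into end_value."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above: $23.5 billion (2022) to $42.9 billion (2027).
rate = compound_annual_growth_rate(23.5, 42.9, 2027 - 2022)
print(f"Implied annual growth: {rate:.1%}")
```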

As the supply of such services increases, so will the demand for, and expectation of, their use.

As AI advances over the years, it is likely that UX researchers will increasingly collaborate with AI and map AI emotion analysis outcomes onto user journey map deliverables (Userlytics, 2023). Richer emotion-based insights from AI could be used to give greater credence to significant emotional events in the user journey that we can act on and design for.

In Summary

UX researchers may not always have the capacity to fully capture the nuances of an emotional experience surrounding a digital product.

However, the emergence of Emotion AI presents an opportunity for UX researchers to collaborate with AI to do just this.

As the global market for emotion detection and recognition continues to grow, the potential for Emotion AI to improve the overall user experience is immense. With continued advancements in AI, the future of UX research looks exciting.

Further reading

https://www.gla.ac.uk/news/headline_915537_en.html

Key References

Journal Articles:

Flacandji, M., & Krey, N. (2020). Remembering shopping experiences: The Shopping Experience Memory Scale. Journal of Business Research, 107, 279–289. Available at: https://www.sciencedirect.com/science/article/abs/pii/S0148296318305204

Hunkenschroer, A. L., & Kriebitz, A. (2023). Is AI recruiting (un)ethical? A human rights perspective on the use of AI for hiring. AI Ethics, 3, 199–213. https://doi.org/10.1007/s43681-022-00166-4

Nairne, J. S., Pandeirada, J. N. S., & Thompson, S. R. (2008). Adaptive Memory: The Comparative Value of Survival Processing. Psychological Science, 19(2), 176–180. https://doi.org/10.1111/j.1467-9280.2008.02064.x

Blog:

Userlytics. (2023). AI for UX Research and Design. Userlytics Blog. Retrieved from https://blog.userlytics.com/ai-for-ux-research-and-design/

Websites:

MarketsandMarkets. (2019). Emotion Detection and Recognition Market by Technology (Natural Language Processing, Machine Learning, and Others), Software Tools (Facial Expression Recognition, Speech & Voice Recognition, and Others), Services, Application Areas, End Users, and Regions - Global Forecast to 2020. Retrieved from https://www.marketsandmarkets.com/Market-Reports/emotion-detection-recognition-market-23376176.html

World of Work Project. (n.d.). Mehrabian’s 7-38-55 Communication Model: It’s More Than Words. Retrieved from https://worldofwork.io/2019/07/mehrabians-7-38-55-communication-model/

Book:

Medina, J. (2014). Brain Rules: 12 Principles for Surviving and Thriving at Work, Home, and School. Pear Press.

Articles:

Odsc. (2020). The Evolution of AI Emotion and Sentiment Analysis. Medium. Retrieved from https://odsc.medium.com/the-evolution-of-ai-emotion-and-sentiment-analysis-611b118bd983

Market Business News. (2021). The Convergence of Emotional Intelligence and AI. Retrieved from https://marketbusinessnews.com/convergence-emotional-intelligence-ai/306019/

Skyland Trail. (n.d.). 4 Differences Between CBT and DBT and How to Tell Which is Right for You. Retrieved from https://www.skylandtrail.org/4-differences-between-cbt-and-dbt-and-how-to-tell-which-is-right-for-you/

Oliver, R. L., & Kasser, T. (2015). The New Science of Customer Emotions. Harvard Business Review. Retrieved from https://hbr.org/2015/11/the-new-science-of-customer-emotions

Video:

isitedesign. (2015). Aarron Walter of MailChimp on Designing Emotional Experiences [Video file]. Retrieved from https://www.youtube.com/watch?v=V4mvLTos_-k
